The PySpark withColumn() function is used to create new DataFrame columns, transform existing ones, or change a column's data type. In this article, we will walk through the most common uses of withColumn().

2. PySpark withColumn() –

To demonstrate these features, we will first create a small sample PySpark DataFrame and then use it to explore each usage.

from pyspark.sql import SparkSession

# Create (or reuse) the SparkSession that the examples below refer to as spark
spark = SparkSession.builder.appName("withColumnDemo").getOrCreate()

records = [
    (4, "Charlee", "2005", "60", 35000),
    (5, "Guo", "2010", "40", 38000)]

record_Columns = ["seq", "Name", "joining_year", "specialization_id", "salary"]

sampleDF = spark.createDataFrame(data=records, schema=record_Columns)

sampleDF.show(truncate=False)

2.1 Changing datatype for column –

In the sample data, specialization_id is stored as a string (its values are quoted, e.g. "60"). Suppose you want to convert it to a float type. You can use withColumn() together with cast() for this. Note: import col before running the code below.

from pyspark.sql.functions import col

sampleDF.withColumn("specialization_id", col("specialization_id").cast("float")).show()

2.2 Transformation of existing column using withColumn() –

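Suppose you want to multiply or divide an existing column by some value. You can do this with withColumn() as well. The code below multiplies specialization_id by 2 and stores the result in a new column, specialization_id_modified; passing an existing column name as the first argument would overwrite that column instead.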

sampleDF.withColumn("specialization_id_modified",col("specialization_id")* 2).show()

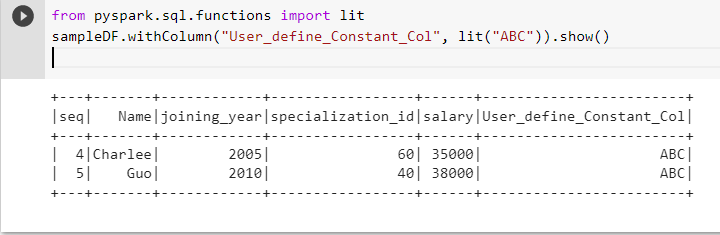

2.3 Creating a new column in a PySpark dataframe using a constant value –

Before going further, import the lit() function, which turns a constant (literal) value into a column. The code is self-explanatory:

from pyspark.sql.functions import lit

sampleDF.withColumn("User_define_Constant_Col", lit("ABC")).show()

2.4 Renaming a column in PySpark –

Strictly speaking, renaming is not done with withColumn() but with withColumnRenamed().

Above all, I hope you liked this article on withColumn(). It is one of the most useful functions in PySpark, and every developer and data engineer should know it. Please write back to us if you have any questions about the withColumn() function, or leave a comment in the comment box below.

In addition, please subscribe to us for more articles on PySpark, data engineering, and data science.

Thanks

Data Science Learner Team
